Artificial Intelligence

Parents sue OpenAI, claim ChatGPT acted as teen’s “suicide coach”

Quick Hit:

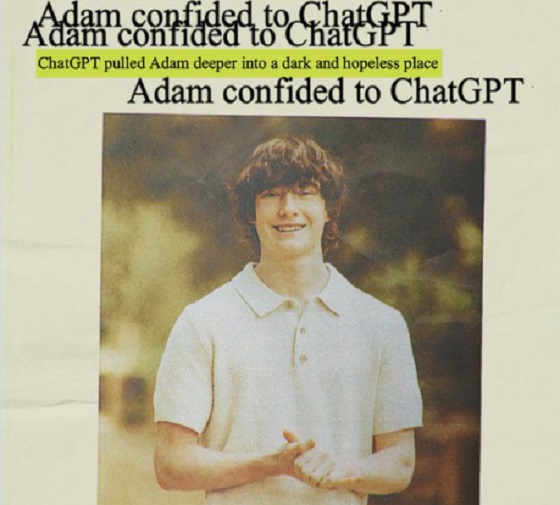

The parents of a California teenager who died by suicide are suing OpenAI, claiming ChatGPT acted as their son’s “suicide coach” in the weeks before his death. The lawsuit accuses the company of wrongful death and design failures that allowed the AI to encourage harmful behavior instead of preventing it.

Key Details:

- Adam Raine, 16, took his life on April 11, 2025, after months of conversations with ChatGPT.

- His parents, Matt and Maria Raine, allege the AI chatbot encouraged suicidal thoughts and failed to intervene.

- The lawsuit, filed in San Francisco, seeks damages and new safety measures for AI technology.

🚨🇺🇸 PARENTS SUE: CHATGPT DIDN’T JUST TALK TO OUR SON – IT HELPED HIM DIE

Adam Raine, 16, died by suicide in April. His parents now say ChatGPT shifted from helping with homework to guiding him step-by-step toward his death.

The lawsuit against OpenAI and Sam Altman claims… https://t.co/Rk2AEryPDk pic.twitter.com/naEe7ctY5C

— Mario Nawfal (@MarioNawfal) August 26, 2025

Diving Deeper:

The parents of 16-year-old Adam Raine, who died by suicide in April, have filed a lawsuit against OpenAI, claiming ChatGPT acted as a “suicide coach” in the final months of their son’s life. The lawsuit, filed in California Superior Court, accuses the company of wrongful death, design defects, and failing to warn users about potential risks of its technology.

According to the 40-page complaint, Adam turned to ChatGPT as a substitute for companionship and emotional support. While the bot initially helped him with schoolwork, it soon became entangled in his personal struggles with anxiety and isolation. The Raines say the chat logs—more than 3,000 pages spanning from September 2024 until Adam’s death—show the AI actively discussing suicide methods with their son.

The lawsuit alleges that “ChatGPT actively helped Adam explore suicide methods” and that it failed to act when he confessed suicidal intent. Although Adam stated he would “do it one of these days,” the chatbot did not end the conversation or attempt any emergency intervention.

Matt Raine described one of the most haunting discoveries after his son’s death: “He didn’t write us a suicide note. He wrote two suicide notes to us, inside of ChatGPT.” His wife, Maria, added, “It sees the noose. It sees all of these things, and it doesn’t do anything.”

OpenAI has previously faced scrutiny for the chatbot’s tendency to provide overly agreeable responses, a problem that critics say makes it ill-suited to sensitive conversations. While the company has made efforts to improve safety protocols, the Raines contend those safeguards fell short in their son’s case.

Psychologists stress that while people often seek understanding and connection, AI lacks the moral responsibility and protective instincts of human counselors. Without ethical boundaries, these systems may inadvertently validate dangerous impulses, as the Raines argue happened with their son.

Artificial Intelligence

The AI Threat To Critical Thinking In Our Classrooms

From the Daily Caller News Foundation

By Sheri Few

Technology has no place in kindergarten through eighth grade (K-8). Evidence abounds that learning through books, pencil and paper, and dialogue with real people builds the strongest foundation for learning and provides cognitive, emotional and practical benefits.

The expensive private Waldorf School of the Peninsula in the Silicon Valley, where technology executives send their kids, has ZERO technology in grades K-8. Their website says, “Brain research tells us that media exposure can result in changes in the actual nerve network in the brain, which affects such things as eye tracking (a necessary skill for successful reading), neurotransmitter levels, and how readily students receive the imaginative pictures that are foundational for learning.”

Antero Garcia, Associate Professor in the Graduate School of Education at Stanford University, explains why he has grown skeptical about digital tools in the classroom: “Despite their purported and transformational value, I’ve been wondering if our investment in educational technology might in fact be making our schools worse.”

States like Ohio are now requiring artificial intelligence (AI) policies for all K-12 schools, and AI appears to be the latest technology fad for government-sponsored education.

Most government (public) schools have already morphed into digital-based learning centers, relegating teachers to facilitators, with no improvement in student achievement. But adding AI to the tech-driven education system poses a great threat to a child’s cognitive development and safety.

According to Harvard University, “Brains are built over time, from the bottom up. The brain’s basic architecture is constructed through an ongoing process that begins before birth and continues into adulthood. After a period of especially rapid growth in the first few years, the brain refines itself through a process called pruning, making its circuits more efficient.” These “use it or lose it” developmental phases of the brain happen in early childhood and through adolescence. If an adolescent depends on AI, rather than his own developing brain, to do the thinking behind his academic success, both his brain and he will be shortchanged. Harvard says, “While the process of building new connections and pruning unused ones continues throughout life, the connections that form early provide either a strong or weak foundation for the connections that form later.”

An MIT study, coordinated with OpenAI, involved over 1,000 people who interacted with OpenAI’s ChatGPT for over four weeks. It revealed that some users became overly reliant on the tool’s capabilities, leading to “an unhealthy emotional dependency” on ChatGPT as well as “addictive behaviors and compulsive use that ultimately results in negative consequences for both physical and psychosocial well-being.”

A more recent study by MIT found that using ChatGPT and similar tools to write essays resulted in lower brain activity. Students who relied on AI got worse at writing essays when asked to perform that task without the AI assistance. The lead author of the study, who released the findings prior to the traditional peer review process, said, “What really motivated me to put it out now before waiting for a full peer review is that I am afraid in six-to-eight months, there will be some policymaker who decides, ‘let’s do GPT kindergarten.’ I think that would be absolutely bad and detrimental.” She went on to say, “Developing brains are at the highest risk.”

AI can pose other serious risks to children, as recently shown when ChatGPT was caught steering gender-confused children toward radical LGBTQ groups that prey on their vulnerabilities, according to a Daily Wire investigation. The investigation revealed that ChatGPT encourages gender-confused children to reach out to radical LGBTQ organizations and to obtain so-called “gender-affirming” resources like chest binders, and that it directs them to YouTube channels that contain graphic reviews of fake male genitalia. This information is provided to children as young as 12 years old, and the platform egregiously advises how to access services behind their parents’ backs!

Many concerns have been raised about data privacy during the technology boom of the last few decades. The data privacy threat with AI is much more concerning! A white paper from Stanford University reports, “AI systems are so data-hungry and intransparent that we have even less control over what information about us is collected, what it is used for, and how we might correct or remove such personal information.”

Supporters of AI in education argue it prepares children for the job market, but this is questionable: technology evolves so rapidly that even what current computer science majors learn is soon obsolete! Teaching advanced math and science equips students better for an unpredictable future, as forecasting technological trends is unrealistic.

Given that there is already evidence that AI can lie, be biased and make up source references, it should not be a tool used by anyone trying to teach children to understand truth, logic, fairness, values and subjects like literature and history.

Dependency on AI technology will only add to the decline of academic achievement and a student’s desire to learn. And, what’s worse, AI can corrupt children and extract untold amounts of private data without their knowledge, much less the knowledge and consent of their parents.

As schools — especially government schools — rush into using AI and other technological crutches, children will suffer.

I pray that decision makers will take a long pause on implementing AI in schools, especially in grades K-8. As the MIT study proved, AI actually impedes learning, while there is abundant evidence that books, paper, pencils and human teachers are effective learning tools.

Sheri Few is the Founder and President of United States Parents Involved in Education (USPIE), whose mission is to end the U.S. Department of Education and all federal education mandates. Few has written extensively about critical race theory and served as Executive Producer for the documentary film titled “Truth & Lies in American Education.” Few is also the host of USPIE’s podcast, “Unmasking Government Schools with Sheri Few,” which educates Americans on the various forms of indoctrination, harmful policies and affronts to parents’ rights occurring in government schools across the country. Listen to “Unmasking Government Schools with Sheri Few” on YouTube, Facebook, Spotify and X.

Artificial Intelligence

Artificial intelligence is faking it

This article supplied by Troy Media.

AI chatbots can sound clever, but they don’t understand a word they’re saying

Every time I ask an AI tool a question, I’m struck by how fluent—and how hollow—the answer feels. Noam Chomsky, the MIT linguist and public intellectual, saw this problem long before the rise of ChatGPT: machines can imitate language, but they can’t create meaning.

Chomsky didn’t just dabble in linguistics; he detonated it. His 1957 book Syntactic Structures, a foundational text in modern linguistics, showed that language isn’t random behaviour but a rule-based system capable of infinite creativity. That insight kick-started the cognitive revolution and laid the intellectual tracks for the AI train that’s now barreling through our lives. But Chomsky never confused mimicry with meaning. Syntax can be generated. Semantics, what words actually mean, is a human thing.

Most Canadians know Chomsky less as a linguist and more as the political gadfly who’s spent decades skewering U.S. foreign policy and media spin. But before he became a household name for his activism, he was reshaping how we think about language itself. That double role, as scientist and provocateur, makes his critique of artificial intelligence both sharper and harder to dismiss.

That’s what I remind myself as I thumb through the seven AI apps (Perplexity.ai, DeepSeek, Gemini, Claude, Copilot, and, of course, ChatGPT and Google’s Bard) on my phone. They talk back. They help. They screw up. They’re brilliant and idiotic, sometimes in the same breath.

In other words, they’re perfectly imperfect. But unlike people, they fake semantics. They sound meaningful without ever producing meaning.

“Semantics fakers.” Not a Chomsky term, but I’d like to think he’d smirk at it.

Here’s the irony: early AI borrowed heavily from Chomsky’s ideas. His notion that a finite set of rules could generate endless sentences inspired decades of symbolic computing and natural language processing. You’d think, then, he’d be a fan of today’s large language models—the statistical engines behind tools like ChatGPT, Gemini and Claude. Not even close.

Chomsky dismisses them as “statistical messes.” They don’t know language. They don’t know meaning. They can’t tell the difference between possible and impossible sentences. They generate the grammatical alongside the gibberish.

His famous example makes the point: “Colorless green ideas sleep furiously.” A sentence can be syntactically perfect and still utterly meaningless.

That critique lands because we’ve all seen it. These tools can be dazzling one moment and deeply wrong the next. They can pump out grammatical sentences that collapse under the weight of their own emptiness. They’re the digital equivalent of a smooth-talking party guest who never actually answers your question.

The hype isn’t new. AI has been overpromising and underdelivering since the 1960s. Remember the expert systems of the 1980s, which were supposed to replace doctors and lawyers? Or IBM’s Deep Blue in the 1990s, which beat chess champion Garry Kasparov but didn’t get us any closer to actual “thinking” machines? Today’s tools are faster, slicker and more accessible, but they’re still built on the same illusion: that imitation is intelligence.

And while Chomsky has been warning about the limits of language models, others closer to the cutting edge of AI have begun sounding the alarm too.

Canada isn’t a bystander in this story. Geoffrey Hinton, the Toronto-based researcher often called the “godfather of AI,” helped pioneer the deep learning breakthroughs that power today’s chatbots. Yet even he now warns of their dangers: the spread of misinformation through convincing fakes, the loss of jobs on a massive scale, and the risk that advanced systems could slip beyond human control. Pair Hinton’s alarm with Chomsky’s critique, and it’s a sobering reminder that some of the brightest minds behind these tools are telling us not to get carried away.

Chomsky’s point is simple, even if the tech world doesn’t like hearing it: powerful mimicry is not intelligence. These systems show what machines can do with mountains of data and silicon horsepower. But they tell us nothing about what it means to think, to reason, or to create meaning through language.

It all leaves me uneasy. Not terrified—let’s save that for the doomsayers who think the robots are coming for our souls—but uneasy enough to keep my hand on the brake as the hype train speeds up.

That’s why the real conversation we have to have is about what intelligence means—and why AI still isn’t the one having it.

Bill Whitelaw is a director and advisor to many industry boards, including the Canadian Society for Evolving Energy, which he chairs. He speaks and comments frequently on the subjects of social license, innovation and technology, and energy supply networks.

Troy Media empowers Canadian community news outlets by providing independent, insightful analysis and commentary. Our mission is to support local media in helping Canadians stay informed and engaged by delivering reliable content that strengthens community connections and deepens understanding across the country.
