By Frank Wright

If we do not limit the freedom of reach of AI now, we will have neither liberty nor security. The digital world is already here. Who will watch whom, and according to whose rules? With the World Economic Forum, you get policed by liberal extremists.

The real-world influence of the World Economic Forum (WEF) is certainly waning – which may explain a fresh report of its push towards digital globalism.

A white paper published by the WEF last November is a roadmap for a transition from the real to the virtual world. This transition is not only about methods of governing, of course.

It means the mass migration of humanity into a virtual world.

As the document says, the World Economic Forum is calling for “global collaboration” to “redefine the norms” of a future digital state, which it calls “the metaverse.”

Merging online and offline

Titled “Shared Commitments in a Blended Reality: Advancing Governance in the Future Internet,” this agenda presumes a borderless reality for humans in which “online and offline” are merged.

As usual, there is a disturbing method in the diabolical madness of the WEF. Saying that the required technology has already arrived, it urges “aligning global standards and policies of internet governance” to moderate our increasingly digital lives.

Yet this is not about policing online speech. It is about ruling the new “blended reality.”

Mentioning mobile phones, virtual reality and the refinement of artificial intelligence in predicting and reproducing human activity, the WEF report states: “These technologies are blurring the line between online and offline lives, creating new challenges and opportunities … that require a coordinated approach from stakeholders for effective governance.”

Stakes and their holders

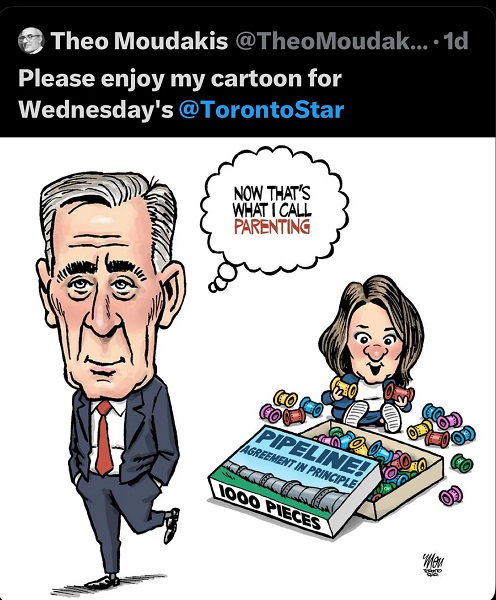

Yet the people holding the stakes in this online and offline game of life are not only globalists like Schwab and Soros. The vampire hunters of populism are all strong critics of globalism – the replacement of all nation states with a single world government.

Populists like Donald Trump are also seeking to drive a stake through the globalist liberal agenda, described as “LGBT, open borders, and war” by Hungary’s pro-family populist leader Viktor Orbán.

It would seem that the WEF’s dream of digital globalism may be terminally interrupted by the new software running through the machinery of power.

Yet digital globalism is not the only game in town.

Amidst the welcome relief and tremendous hope sparked in the West by Trump’s “Common Sense Revolution,” there is a devil in the details of the death of the liberal order.

The algorithm of power is not going anywhere. It is here, now, and it is simply a question of how far it goes.

Digital globalism, or national digitalism?

Digital globalism may simply be swapped for national digitalism – government by algorithm in one country. Its values are not liberal, which is a change. Yet neither are the values of China, where a form of digitalism has been long established.

It is worthwhile taking a look at the community whose guidelines may rule your “online and offline” life in the absence of those of the globalists.

Here is an announcement from one globalist “datagarch,” Oracle’s Larry Ellison, one of the billionaires whose monopoly of your data enriched their lives at the expense of the capture of yours. Ellison says “citizens will be on their best behavior” with an all-pervasive AI surveillance system.

Oracle’s co-founder has said a government powered by AI could make everyone safer – because everyone would be under permanent surveillance. Comforting, isn’t it?

The Ellison family name was chosen by his adoptive father in honor of Ellis Island, his point of entry into the U.S. In 2017 Ellison donated $16 million to the Israeli army, calling Israel “our home.”

Wikipedia states, “As of January 20, 2025, he is the fourth-wealthiest person in the world, according to Bloomberg Billionaires Index, with an estimated net worth of US$188 billion, and the second wealthiest in the world according to Forbes, with an estimated net worth of $237 billion.”

In 2021, he offered Benjamin Netanyahu a “lucrative position on the board of Oracle.” That may partly explain why Netanyahu, with such friends in very high places, has such an extraordinary influence on almost every single member of the U.S. House and Senate.

Ellison’s Oracle was named after a database he created for the CIA, in his first major programming project. In fact, “the CIA made Larry Ellison a billionaire,” as Business Insider reported.

What kind of values inspire his vision of digital governance? His biography supplies one answer:

“Ellison says that his fondness for Israel is not connected to religious sentiments but rather due to the innovative spirit of Israelis in the technology sector.”

Israel has a massive, lucrative military-industrial complex and related software industry, as revealed in “The Palestine Laboratory: How Israel exported its occupation to the world” by Antony Loewenstein, one of many Israeli Jews who have become highly critical of the surveillance industry.

Israel’s “innovation” includes the use of predictive AI to identify, target and kill people, and systems like Pegasus – which can enter literally any phone or computer undetected and read everything. It is an astonishingly powerful program that sells for a high price and earns Israel a lot of income.

The company that makes the “no click spyware” Pegasus is called NSO. The U.S. sanctioned this Israeli company in 2021 to prevent its undetectable intrusion into phones and computers from being used on Americans by any company or agency that buys it.

On January 10, an Israeli report said that Donald Trump’s Gaza ceasefire deal could see these sanctions lifted.

Do you buy the idea that this will make you safe? Do you think AI will be effective? Ellison thinks so. He says AI can produce “new mRNA vaccines in 48 hours to cure cancer.”

Do you want to live in his world?

Buyer beware

Buyer – beware. The algorithm of digital power is here, and it is powered by data mined from your life.

People like Oracle’s Ellison, Palantir’s Alex Karp, Facebook’s Mark Zuckerberg, and Google’s Larry Page and Sergey Brin are all data miners. So is X’s Elon Musk – who is the only one of the data oligarchs warning you that AI needs to be controlled by humans – and not the other way around.

Two forms of digital tyranny

So what are the dangers? Under the “metaverse” proposed by the WEF, your life can be partnered with a “digital twin.”

This is the symbiotic merger of human with machine presented as the vision of our future by Klaus Schwab and the digital globalists.

Of course, your online life can be suspended or even ended if you violate the community guidelines. These rules are not written by people who agree with you.

Some people you may agree with are proposing quite the reverse. Under the algorithm of the “national digigarchy,” you will be watched, recorded, filed, and assessed for the potential commission of future crimes. You will be free to say what you like online, but depending on what you say, maybe only the algorithm will see you.

And what it sees it will never forget.

Limiting the reach of AI

If we do not limit the freedom of reach of artificial intelligence now, we will have neither liberty nor security.

The digital world is already here. Who will watch whom, and according to whose rules? With the World Economic Forum, you get policed by liberal extremists. You will be free to agree with Net Zero, degeneracy, denationalization, and a diet of meat-like treats supplied to the wipe-clean mausoleum in which you will cleanly and efficiently live.

Yet the emerging alternative also says that the rule of machines will make everything safe and effective.

Safe and effective AI?

Alex Karp sells his all-seeing Palantir as the only guarantee of public safety. He also says your secrets are safe with him – because he is “a deviant” who might like to take drugs or have an affair.

After years of crisis manufactured by policy, and with the West sick of liberal insanity, this moment of tremendous relief contains a serious threat. More people than ever have the number of the globalists, and it is not a number most faithful Christians would want to call.

People generally have seen what the WEF is selling, and they are not buying it. The danger presented by the likes of Schwab is now out in the open, shouting the quiet part out loud.

As liberal-globalist bureaucracies like these become more isolated in the Trump Revolution, they will fight for their lives. In doing so, they are displaying their true intentions. This is the only thing they can do to survive.

Everyone will see what is really on offer, few will want this devil’s bargain, and so the business model will go bust.

Yet this is not the only dangerous game being played with your life.

Beware the specter at the feast

The data miners whose programs refine the algorithm of power are selling you a new digital reality. They are telling you that it will make you safe – because everyone will be watched, forever, by machines which have no values and no heart at all, whether liberal or otherwise.

If we are not watching out, no one will notice that the new algorithm of digital power has simply been limited to the West.

In Shakespeare’s play it was the guilty man, Macbeth, who saw the specter at the feast he held for his coronation.

The ghost in the machine is not dead. The danger is that the innocent may not see it or may foolishly not want to see it. Yet it sees you. This is the algorithm of power, and for now – but not for long – we still have the power to say who it watches – and where.